Building with AI means living with some tradeoffs. Switch models and you lose your context. Start a new session and your agents reset. The platforms hosting your prompts aren't neutral infrastructure: they learn from what you send, and they make leaving progressively more costly.

Ekai is building the infrastructure layer to fix this. Step one is already live.

Problem One: Access Control

People share access to AI models all the time, among friends, colleagues, and side projects, but nobody wants to hand over their actual credentials.

With Ekai's Control Plane, you store encrypted API keys onchain via Sapphire and grant delegated access with fine-grained controls: model restrictions, spending limits, and instant revocation. Decryption happens only inside the trusted execution environment (TEE). Credentials are never visible to anyone outside it.
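To make the three controls concrete, here is a minimal sketch of the kind of check a gateway could run before forwarding a delegated request. It is an illustration only, not Ekai's contract or gateway code: the `Delegation` record, its fields, and the `authorize` function are hypothetical names, and a real deployment would keep this state onchain rather than in a Python object.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass
class Delegation:
    """Hypothetical record of access a key owner grants to one delegate."""
    delegate: str
    allowed_models: FrozenSet[str]
    spend_limit_usd: float
    spent_usd: float = 0.0
    revoked: bool = False


def authorize(d: Delegation, caller: str, model: str,
              est_cost_usd: float) -> Tuple[bool, str]:
    """Return (allowed, reason) for a single delegated request."""
    if d.revoked:
        return False, "revoked"
    if caller != d.delegate:
        return False, "unknown delegate"
    if model not in d.allowed_models:
        return False, "model not allowed"
    if d.spent_usd + est_cost_usd > d.spend_limit_usd:
        return False, "spending limit exceeded"
    return True, "ok"


def record_spend(d: Delegation, cost_usd: float) -> None:
    """Add the cost of a completed request to the running total."""
    d.spent_usd += cost_usd


# Illustrative values only: model names and amounts are placeholders.
grant = Delegation("alice", frozenset({"model-a"}), spend_limit_usd=5.0)

first = authorize(grant, "alice", "model-a", 2.0)
record_spend(grant, 2.0)
record_spend(grant, 2.0)
over_budget = authorize(grant, "alice", "model-a", 2.0)
wrong_model = authorize(grant, "alice", "model-b", 0.5)

grant.revoked = True  # revocation takes effect on the very next request
after_revoke = authorize(grant, "alice", "model-a", 0.5)
```

The point of the sketch is ordering: revocation is checked first, so a revoked grant fails immediately regardless of remaining budget.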

Six providers are currently live: OpenAI, Anthropic, Google, xAI, OpenRouter, and Groq. The gateway runs on ROFL.

Problem Two: Agent Context

Sharing model access is relatively easy. Sharing context is the next challenge.

When an AI agent works on a project for an hour, it accumulates code patterns, architectural decisions, debugging traces, file contents, tool outputs, and the reasoning connecting all of it. Today, that context lives in plaintext. It gets sent to whichever model you're using. It's lost when you switch providers. It's gone or hard to reference when you start a new session.

It degrades within a single session too. After 30 or 40 turns, the agent starts repeating itself, re-reading files it already checked, forgetting constraints from the beginning. Summarization helps, but it's lossy. Once a detail gets compressed away, the agent often can't recover it.

This is an infrastructure problem. And it gets worse as agents get more capable, because the context they accumulate gets more valuable over time.

What Ekai Is Building: Contexto

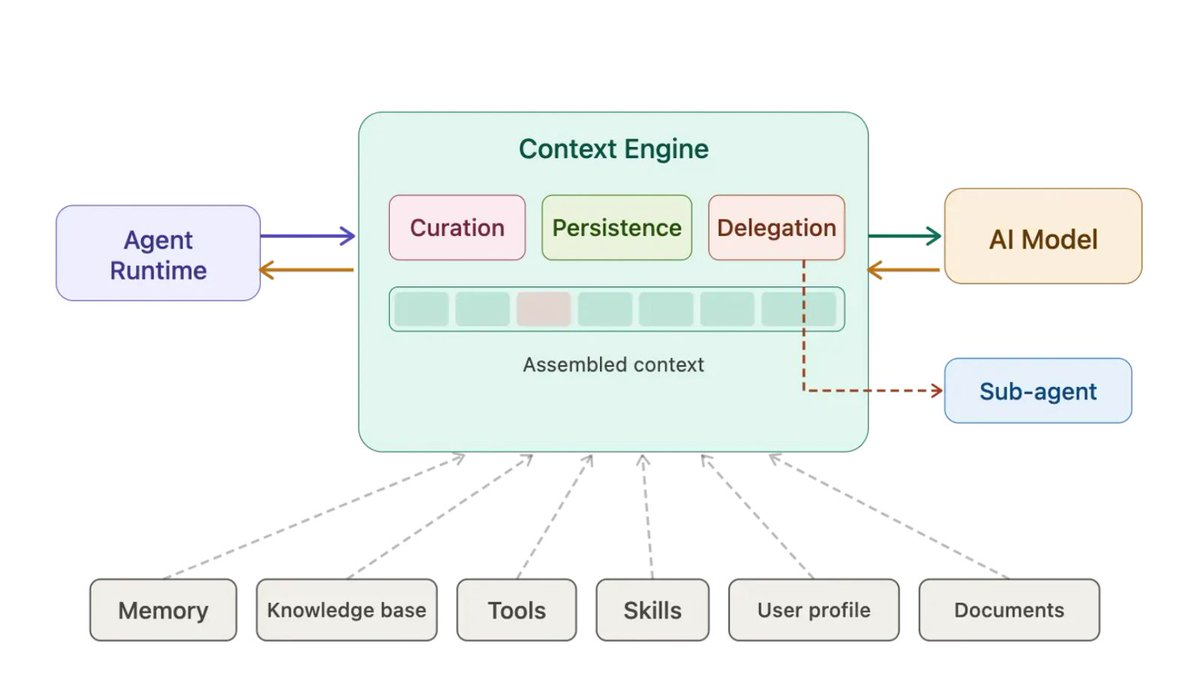

Contexto is a context layer for AI agents.

It keeps the main thread clean by storing and indexing older work, retrieving the right context automatically when the agent needs it. It runs subtasks in isolation, spawning scoped workers that handle messy jobs (log review, document comparison, branch investigation) and return structured results. And it recovers anything on demand. Nothing gets thrown away.
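The store-index-retrieve idea can be sketched with a plain keyword index. This is a toy under stated assumptions, not a description of how Contexto indexes or ranks context: `ArchiveIndex`, `archive`, and `retrieve` are hypothetical names, and keyword overlap stands in for whatever retrieval method the plugin actually uses.

```python
import re
from collections import defaultdict
from typing import Dict, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ArchiveIndex:
    """Hypothetical store for older turns, searchable by keyword overlap."""

    def __init__(self) -> None:
        self.entries: List[str] = []
        self.postings: Dict[str, Set[int]] = defaultdict(set)

    def archive(self, text: str) -> int:
        """Store the full text (nothing is summarized away) and index it."""
        entry_id = len(self.entries)
        self.entries.append(text)
        for tok in tokenize(text):
            self.postings[tok].add(entry_id)
        return entry_id

    def retrieve(self, query: str, k: int = 2) -> List[str]:
        """Return up to k archived entries sharing the most tokens with query."""
        scores: Dict[int, int] = defaultdict(int)
        for tok in tokenize(query):
            for entry_id in self.postings.get(tok, ()):
                scores[entry_id] += 1
        ranked = sorted(scores, key=lambda i: (-scores[i], i))
        return [self.entries[i] for i in ranked[:k]]


index = ArchiveIndex()
index.archive("Decision: use Postgres for the session store")
index.archive("Constraint: never log raw API keys")
index.archive("Debug trace: auth service timeout on node-3")

hits = index.retrieve("which constraint applies to API keys?", k=1)
```

Because entries are archived verbatim, a constraint stated early in a session can still be pulled back in full later, which is the property summarization alone does not give you.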

A concrete example: your agent is investigating a production bug. Without Contexto, it greps files, reads logs, makes API calls, and by turn 20 it's forgotten the original error. With Contexto, a scoped worker handles the investigation, reads 12 files, and sends back one line: "Auth service returning 401 due to expired cert on node-3." The main thread stays small. The full trace is there if you need it.
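The scoped-worker pattern in that example can be sketched in a few lines. Everything here is hypothetical scaffolding (`ContextStore`, `run_scoped`, `WorkerResult`, and the simulated file reads are invented for illustration, not the Contexto plugin API); the sketch only shows the shape of the idea: the worker's scratch work stays out of the main thread, one summary line comes back, and the full trace remains recoverable by ID.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class WorkerResult:
    trace_id: str  # handle for recovering the full trace later
    summary: str   # the only text that enters the main thread


class ContextStore:
    """Hypothetical store that keeps every worker trace."""

    def __init__(self) -> None:
        self._traces: Dict[str, List[str]] = {}
        self._ids = itertools.count(1)

    def save(self, steps: List[str]) -> str:
        trace_id = f"trace-{next(self._ids)}"
        self._traces[trace_id] = list(steps)
        return trace_id

    def recover(self, trace_id: str) -> List[str]:
        return list(self._traces[trace_id])


def run_scoped(task: Callable[[List[str]], str],
               store: ContextStore) -> WorkerResult:
    """Run a task with its own scratch log, then archive that log."""
    scratch: List[str] = []
    summary = task(scratch)
    return WorkerResult(store.save(scratch), summary)


def investigate(scratch: List[str]) -> str:
    """Simulated investigation: 12 file reads, one conclusion."""
    for i in range(1, 13):
        scratch.append(f"read file_{i}.log")
    return "Auth service returning 401 due to expired cert on node-3."


store = ContextStore()
main_thread = ["user: why is login failing in production?"]

result = run_scoped(investigate, store)
main_thread.append(f"worker[{result.trace_id}]: {result.summary}")

full_trace = store.recover(result.trace_id)  # all 12 steps, on demand
```

After the run, `main_thread` holds two entries while the store holds the twelve intermediate steps.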

Contexto is built as an OpenClaw plugin, so no migration is necessary.

Why This Needs Oasis

A credential lets you access a model. Context is what the model produced while working for you. As sessions get longer, the context layer becomes a complete record of your project. Today, all of that lives in plaintext inside whatever provider you're calling.

Oasis solves this the same way it solved credential sharing. ROFL runs the context layer inside a TEE. Context is stored, indexed, and retrieved without ever being visible in plaintext outside the enclave. Sapphire enforces policy onchain: scoped context per agent, restrictions on sharing, provider controls, spending limits, and instant revocation.

Contexto manages the context. Oasis keeps it private.

What's Next

The Control Plane is live on mainnet. The context layer is also live as an OpenClaw plugin; the next step is to bring the context store into the ROFL enclave so persistent context is private by default.

Beyond that, the target is agent-to-agent context routing, where your agent collaborates with someone else's, sharing the right context with the right boundaries, without either side exposing more than they need to. Learn more and try Contexto here.
